This set of multiple-choice questions (MCQs) covers the Deep Learning Week 6 assignment answers.


NOTE: This set of “Deep Learning week 6 answers” contains 10 questions.

Now, start attempting the quiz.

Deep Learning week 6 answers

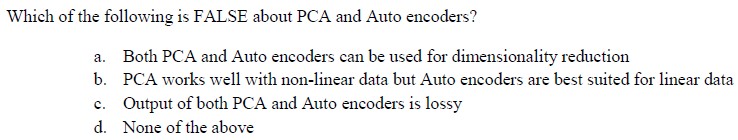

Q1. Which of the following is FALSE about PCA and Autoencoders?

a) PCA works well with non-linear data but Autoencoders are best suited for linear data.

b) Output of both PCA and Autoencoders is lossy.

c) Both PCA and Autoencoders can be used for dimensionality reduction.

d) None of the above.

Answer: a) PCA works well with non-linear data but Autoencoders are best suited for linear data.

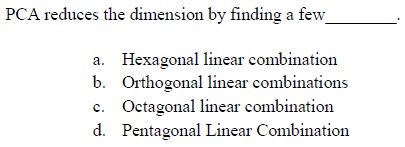

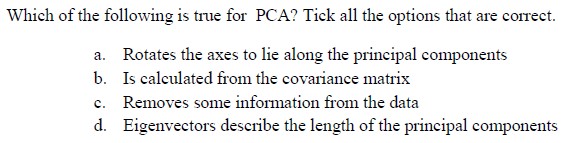

Q2. Which of the following is not true for PCA? Tick all the options that are correct.

a) Rotates the axes to lie along the principal components

b) Is calculated from the covariance matrix

c) Removes some information from the data

d) Eigenvectors describe the length of the principal components

Answer: d) Eigenvectors describe the length of the principal components

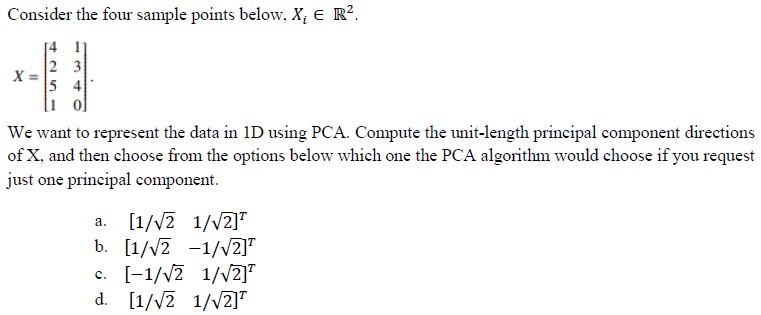

Q3. Consider the four sample points below, Xi ∈ R².

Answer: a)

Q4. Given input x and linear autoencoder (no bias) with random weights (W for encoder and W’ for decoder), what mathematical form is minimized to achieve optimal weights?

a) |x – (W’ . W . x)|

b) |x – (W . W’ . x)|

c) |x – (W . W . x)|

d) |x – (W’ . W’ . x)|

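Answer: a) The encoder is applied first (h = W . x) and the decoder second (W’ . h), so the reconstruction is W’ . W . x.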
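The order of operations in a linear autoencoder can be checked with a small example. This is a minimal sketch, not the course's reference code: the matrices `W` and `W_dec`, the helper `matvec`, and the sample input are all made up for illustration, and it assumes x is a column vector with a 3-to-2-to-3 architecture.

```python
def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a column vector (list)."""
    return [sum(w * v for w, v in zip(row, vector)) for row in matrix]

# Hypothetical weights: encoder W is 2x3, decoder W_dec (W') is 3x2.
W = [[1.0, 0.0, 0.0],
     [0.0, 1.0, 0.0]]
W_dec = [[1.0, 0.0],
         [0.0, 1.0],
         [0.0, 0.0]]

x = [3.0, 4.0, 5.0]

h = matvec(W, x)          # encode: h = W . x      (2 values)
x_hat = matvec(W_dec, h)  # decode: x_hat = W' . h (3 values)

# Squared reconstruction error |x - W'.W.x|^2, the quantity training minimizes.
loss = sum((xi - ri) ** 2 for xi, ri in zip(x, x_hat))
print(h, x_hat, loss)
```

With these toy weights the third coordinate is lost in the bottleneck, so the error equals the square of that coordinate.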

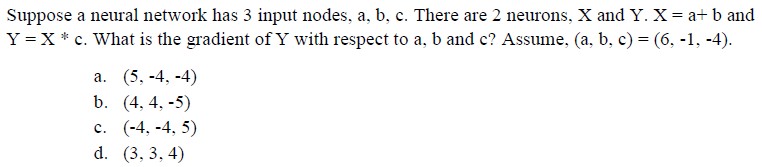

Q5. Suppose a neural network has 3 input nodes, a, b, c. There are 2 neurons, X and F. X = a+2b+4c and F = 2X+1. What is the output F when input (a, b, c) = (-6, 1, 2)?

a) 5

b) 4

c) 9

d) 8

Answer: c) 9
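The arithmetic is X = -6 + 2(1) + 4(2) = 4, then F = 2(4) + 1 = 9. The same forward pass as a short sketch (the function name `forward` is just an illustrative choice):

```python
def forward(a, b, c):
    """Forward pass of the two-neuron network from the question."""
    x = a + 2 * b + 4 * c  # neuron X
    f = 2 * x + 1          # neuron F
    return x, f

x_val, f_val = forward(-6, 1, 2)
print(x_val, f_val)  # 4 9
```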

Q6. Suppose a neural network has 3 input nodes, a, b, c. There are 2 neurons, X and F. X = a+2b+4c and F = 2X+1. What is the gradient of F with respect to a, b and c? Assume (a, b, c) = (-6, 1, 2).

a) (2, 4, 8)

b) (1, 2, 4)

c) (-1, -2, -4)

d) (2, 2, 4)

Answer: a) (2, 4, 8)
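By the chain rule, dF/da = (dF/dX)(dX/da) = 2 · 1, and likewise 2 · 2 and 2 · 4 for b and c. Because the network is linear, the gradient does not depend on the input values. The sketch below compares the chain-rule result with a central finite-difference estimate; the helper names are hypothetical.

```python
def network(a, b, c):
    """F as a function of the three inputs."""
    x = a + 2 * b + 4 * c
    return 2 * x + 1

def analytic_gradient():
    """Chain rule: dF/dX times dX/d(input)."""
    dF_dX = 2
    dX_dinputs = (1, 2, 4)
    return tuple(dF_dX * d for d in dX_dinputs)

def numeric_gradient(a, b, c, eps=1e-6):
    """Central finite differences, used only as a sanity check."""
    point = [a, b, c]
    grads = []
    for i in range(3):
        plus, minus = point[:], point[:]
        plus[i] += eps
        minus[i] -= eps
        grads.append((network(*plus) - network(*minus)) / (2 * eps))
    return tuple(grads)

print(analytic_gradient())          # (2, 4, 8)
print(numeric_gradient(-6, 1, 2))   # approximately the same values
```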

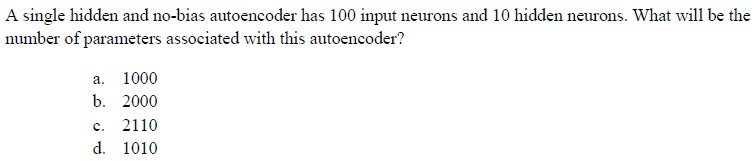

Q7. An autoencoder with a single hidden layer and no bias terms has 100 input neurons and 10 hidden neurons. How many parameters does it have?

a) 1000

b) 2000

c) 2110

d) 1010

Answer: b) 2000
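The count is 100 × 10 encoder weights plus 10 × 100 decoder weights. A small sketch of that calculation, assuming the output layer has the same size as the input and the encoder and decoder weights are not tied (the function name is illustrative):

```python
def autoencoder_param_count(n_input, n_hidden, use_bias=False):
    """Parameters of a single-hidden-layer autoencoder with untied weights."""
    encoder_weights = n_input * n_hidden
    decoder_weights = n_hidden * n_input
    biases = (n_hidden + n_input) if use_bias else 0
    return encoder_weights + decoder_weights + biases

print(autoencoder_param_count(100, 10))                 # 2000
print(autoencoder_param_count(100, 10, use_bias=True))  # 2110
```

The second call shows what the count would be if biases were included, which is why the question stresses the no-bias condition.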

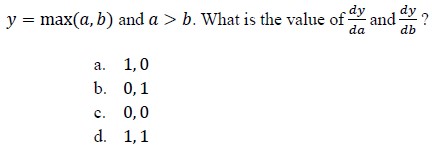

Q8. Let y = min(a, b) with a > b. What are the values of dy/da and dy/db?

a) 1, 0

b) 0, 1

c) 0, 0

d) 1, 1

Answer: b) 0, 1
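Since a > b, y equals b in a neighbourhood of the point, so y does not change with a and changes one-for-one with b. A minimal sketch of that rule follows; `min_grad` is a hypothetical helper, and the tie case a = b is left out because the derivative is not defined there.

```python
def min_grad(a, b):
    """Return (dy/da, dy/db) for y = min(a, b), assuming a != b."""
    if a == b:
        raise ValueError("derivative of min(a, b) is undefined when a == b")
    # The gradient flows only to the smaller argument.
    return (1, 0) if a < b else (0, 1)

print(min_grad(5.0, 3.0))  # (0, 1) because a > b
```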

Q9. Let tanh(x) = T and sigmoid(x) = S. Which of the following relationships between T and S holds?

Answer: c)
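The answer options for this question are not reproduced here, so the following does not map to a specific option letter. Two standard identities relate the functions: tanh(x) = 2 · sigmoid(2x) – 1, and, at the same x, T = (2S – 1) / (2S² – 2S + 1). The sketch below checks both numerically with Python's standard `math` module; the helper names are illustrative.

```python
import math

def sigmoid(x):
    """Logistic sigmoid."""
    return 1.0 / (1.0 + math.exp(-x))

def tanh_from_sigmoid_2x(x):
    """tanh(x) = 2 * sigmoid(2x) - 1."""
    return 2.0 * sigmoid(2.0 * x) - 1.0

def tanh_from_s(s):
    """T in terms of S = sigmoid(x) evaluated at the same x."""
    return (2.0 * s - 1.0) / (2.0 * s * s - 2.0 * s + 1.0)

for x in (-2.0, -0.5, 0.0, 0.5, 2.0):
    t = math.tanh(x)
    s = sigmoid(x)
    print(x, t, tanh_from_sigmoid_2x(x), tanh_from_s(s))
```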

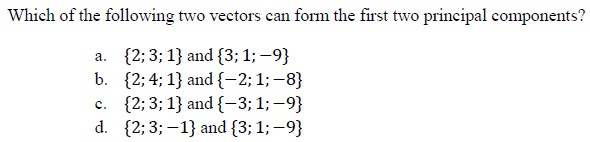

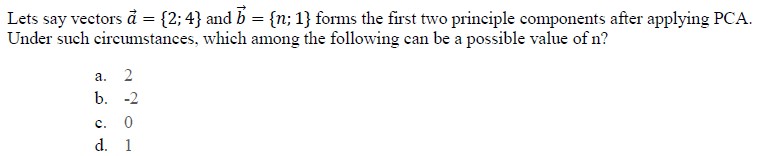

Q10. Which of the following two vectors can form the first two principal components?

a) {2; 3; 1} and {3; 1; -9}

b) {2; 4; 1} and {-2; 1; -8}

c) {2; 3; 1} and {-3; 1; -9}

d) {2; 3; -1} and {3; 1; -9}

Answer: a) Principal components are mutually orthogonal, and only this pair has a zero dot product.
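Each candidate pair can be tested by computing its dot product, since principal components must be orthogonal. A short sketch using the vectors exactly as listed in the options (the names `options` and `dot` are illustrative):

```python
options = {
    "a": ((2, 3, 1), (3, 1, -9)),
    "b": ((2, 4, 1), (-2, 1, -8)),
    "c": ((2, 3, 1), (-3, 1, -9)),
    "d": ((2, 3, -1), (3, 1, -9)),
}

def dot(u, v):
    """Dot product of two equal-length vectors."""
    return sum(p * q for p, q in zip(u, v))

dot_products = {label: dot(u, v) for label, (u, v) in options.items()}
orthogonal = [label for label, value in dot_products.items() if value == 0]

print(dot_products)  # {'a': 0, 'b': -8, 'c': -12, 'd': 18}
print(orthogonal)    # ['a']
```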

Previous – Deep Learning Week 6 answers

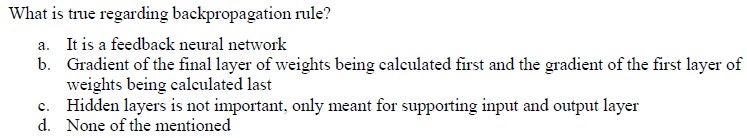

Q1.

Answer: b)

Q2.

Answer: a)

Q3.

Answer: b)

Q4.

Answer: a)

Q5.

Answer: b)

Q6.

Answer: b)

Q7.

Answer: a), b), c)

Q8.

Answer: b)

Q9.

Answer: a)

Q10.

Answer: b)


Disclaimer: Quizermaina doesn’t claim these answers to be 100% correct. Use them for reference only, and always submit your assignment to the best of your own knowledge.
