This set of multiple-choice questions (MCQs) covers the Deep Learning NPTEL Week 9 assignment answers.

NOTE: The answer to each question is given directly below its options. This set of “Deep Learning NPTEL Week 9 answers” contains 10 questions.

Now, start attempting the quiz.

Deep Learning NPTEL 2022 Week 9 answers

Q1. What can be a possible consequence of choosing a very small learning rate?

a) Slow convergence

b) Overshooting minima

c) Oscillations around the minima

d) All of the above

Answer: a) Slow convergence
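The effect of a very small learning rate can be illustrated with a minimal sketch. It assumes a toy objective f(θ) = θ² and a hypothetical helper `steps_to_converge`; neither comes from the assignment. The sketch only counts how many updates are needed before θ gets close to the minimum at 0.

```python
def steps_to_converge(learning_rate, theta=5.0, tol=1e-6, max_steps=100_000):
    """Count gradient descent steps on f(theta) = theta**2 until |theta| < tol.

    The objective, start point, and tolerance are illustrative assumptions.
    """
    for step in range(max_steps):
        if abs(theta) < tol:
            return step
        # The gradient of theta**2 is 2 * theta.
        theta -= learning_rate * 2 * theta
    return max_steps


slow = steps_to_converge(learning_rate=0.001)  # very small learning rate
fast = steps_to_converge(learning_rate=0.1)    # moderate learning rate
print("steps with 0.001:", slow)
print("steps with 0.1:  ", fast)
```

With the smaller rate each step shrinks θ by only a tiny fraction, so many more steps are needed. It neither overshoots nor oscillates, which is why options b) and c) do not apply.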

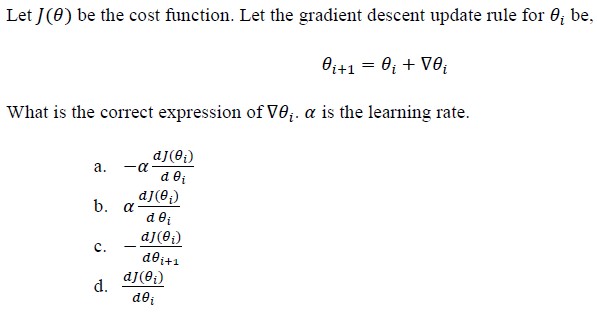

Q2.

Answer: a)

Q3. Which of the following is true about momentum optimizer?

a) It helps accelerate stochastic gradient descent in the right direction

b) It helps prevent unwanted oscillations

c) It helps to know the direction of the next step with knowledge of the previous step

d) All of the above

Answer: d) All of the above
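A minimal sketch of one common momentum formulation follows. It assumes a toy objective f(θ) = θ², a momentum coefficient `beta`, and a hypothetical helper `run`, none of which appear in the assignment. The velocity carries information from previous steps into the next one, which is the idea behind option c).

```python
def grad(theta):
    # Gradient of the assumed toy objective f(theta) = theta**2.
    return 2.0 * theta


def run(beta, learning_rate=0.01, steps=50, theta=5.0):
    """Run gradient descent with momentum coefficient beta.

    beta = 0.0 reduces to plain gradient descent.
    """
    velocity = 0.0
    for _ in range(steps):
        # Blend the previous direction with the current gradient.
        velocity = beta * velocity + grad(theta)
        theta -= learning_rate * velocity
    return theta


plain = run(beta=0.0)
with_momentum = run(beta=0.9)
print("plain GD, distance from minimum:", abs(plain))
print("momentum, distance from minimum:", abs(with_momentum))
```

In this setting the run with momentum ends closer to the minimum after the same number of steps, because consecutive gradients that point the same way accumulate in the velocity.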

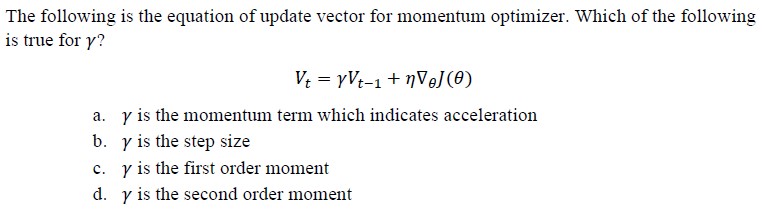

Q4.

Answer: a)

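Q5. A given cost function is of the form $J(\theta) = 6\theta^2 - 6\theta + 6$. What is the weight update rule for gradient descent optimization at step t+1? Consider α to be the learning rate.

a) $\theta_{t+1} = \theta_t - 6\alpha(2\theta_t - 1)$

b) $\theta_{t+1} = \theta_t + 6\alpha(2\theta_t)$

c) $\theta_{t+1} = \theta_t - \alpha(12\theta_t - 6 + 6)$

d) $\theta_{t+1} = \theta_t - 6\alpha(2\theta_t + 1)$

Answer: a) $\theta_{t+1} = \theta_t - 6\alpha(2\theta_t - 1)$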
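The reasoning: differentiating the cost gives dJ/dθ = 12θ – 6 = 6(2θ – 1), and gradient descent subtracts α times this gradient from the current θ. The sketch below checks the analytic gradient against a finite-difference estimate and applies one update. The function names and the sample values of θ and α are assumptions chosen for illustration.

```python
def cost(theta):
    # J(theta) = 6*theta^2 - 6*theta + 6, as given in the question.
    return 6 * theta**2 - 6 * theta + 6


def analytic_grad(theta):
    # dJ/dtheta = 12*theta - 6 = 6 * (2*theta - 1)
    return 6 * (2 * theta - 1)


def numeric_grad(theta, h=1e-6):
    # Central finite difference, used only as a sanity check.
    return (cost(theta + h) - cost(theta - h)) / (2 * h)


def update(theta, alpha):
    # Gradient descent rule from option a).
    return theta - alpha * analytic_grad(theta)


theta, alpha = 2.0, 0.05  # illustrative values
next_theta = update(theta, alpha)
print("analytic gradient:", analytic_grad(theta))
print("numeric gradient: ", numeric_grad(theta))
print("theta after one step:", next_theta)
```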

Q6. If the first few iterations of gradient descent cause the function f(θ0, θ1) to increase rather than decrease, then what could be the most likely cause for this?

a) we have set the learning rate to too large a value

b) we have set the learning rate to zero

c) we have set the learning rate to a very small value

d) learning rate is gradually decreased by a constant value after every epoch

Answer: a) we have set the learning rate to too large a value
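As a rough illustration, the sketch below assumes the toy function f(θ0, θ1) = θ0² + θ1² and a hypothetical helper `descend`; the assignment does not specify either. It records the function value over the first few iterations for two learning rates.

```python
def f(theta0, theta1):
    # Assumed toy function with its minimum at (0, 0).
    return theta0**2 + theta1**2


def descend(learning_rate, steps=3, theta0=1.0, theta1=-2.0):
    """Return the function value before and after each gradient step."""
    history = [f(theta0, theta1)]
    for _ in range(steps):
        # Partial derivatives are 2*theta0 and 2*theta1.
        theta0 -= learning_rate * 2 * theta0
        theta1 -= learning_rate * 2 * theta1
        history.append(f(theta0, theta1))
    return history


too_large = descend(learning_rate=1.5)
reasonable = descend(learning_rate=0.1)
print("learning rate 1.5:", too_large)
print("learning rate 0.1:", reasonable)
```

With the larger rate every step jumps past the minimum and lands farther away than it started, so the value grows. With the smaller rate the value falls at each step.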

Q7. For a function f(θ0, θ1), if θ0 and θ1 are initialized at a global minimum, then what should be the value of θ0 and θ1 after a single iteration of gradient descent?

a) θ0 and θ1 will update as per gradient descent rule

b) θ0 and θ1 will remain same

c) Depends on the values of θ0 and θ1

d) Depends on the learning rate

Answer: b) θ0 and θ1 will remain same
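The gradient is zero at a minimum, so the update term vanishes whatever the learning rate is. A small sketch, assuming the toy function f(θ0, θ1) = (θ0 – 3)² + (θ1 + 1)² with its global minimum at (3, –1); the function and helper names are illustrative, not from the assignment.

```python
def gradient(theta0, theta1):
    # Partial derivatives of (theta0 - 3)**2 + (theta1 + 1)**2.
    return 2 * (theta0 - 3.0), 2 * (theta1 + 1.0)


def step(theta0, theta1, learning_rate):
    """Apply one gradient descent update."""
    g0, g1 = gradient(theta0, theta1)
    return theta0 - learning_rate * g0, theta1 - learning_rate * g1


# Start exactly at the minimum and try several learning rates.
results = [step(3.0, -1.0, lr) for lr in (0.001, 0.1, 10.0)]
print(results)
```

Every entry in `results` equals the starting point, which is why the learning rate (option d) plays no role here.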

Q8. What can be one of the practical problems of exploding gradient?

a) Too large update of weight values leading to unstable network

b) Too large update of weight values preventing the network from learning

c) Too large update of weight values leading to faster convergence

d) Too small update of weight values leading to slower convergence

Answer: a) Too large update of weight values leading to unstable network
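One way to picture an exploding gradient is a chain of scalar linear layers y = w·x, where backpropagation multiplies the gradient by w once per layer. This is a deliberately simplified sketch with assumed depth, weights, and learning rate, not a model from the course.

```python
def gradient_through_layers(weight, depth, upstream=1.0):
    """Backpropagate through `depth` scalar linear layers y = weight * x."""
    grad = upstream
    for _ in range(depth):
        # Chain rule: each layer scales the gradient by its weight.
        grad *= weight
    return grad


stable = gradient_through_layers(weight=1.0, depth=50)
exploding = gradient_through_layers(weight=1.5, depth=50)

learning_rate = 0.01  # illustrative value
print("gradient with weight 1.0:", stable)
print("gradient with weight 1.5:", exploding)
print("resulting weight update: ", learning_rate * exploding)
```

Even with a small learning rate, the update in the second case is enormous, so the weights swing wildly from step to step and training becomes unstable.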

Q9. What are the steps for using a gradient descent algorithm?

1. Calculate error between the actual value and the predicted value

2. Update the weights and biases using gradient descent formula

3. Pass an input through the network and get values from output layer

4. Initialize weights and biases of the network with random values

5. Calculate gradient value corresponding to each weight and bias

a) 1, 2, 3, 4, 5

b) 5, 4, 3, 2, 1

c) 3, 2, 1, 5, 4

d) 4, 3, 1, 5, 2

Answer: d) 4, 3, 1, 5, 2
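The order 4, 3, 1, 5, 2 can be sketched on a one-weight linear model. The model, the mean squared error loss, the data, and the hyperparameters below are all assumptions made for illustration; the comments map each line back to the step numbers in the question.

```python
import random


def train(xs, ys, learning_rate=0.05, epochs=1000, seed=0):
    """Fit y = w * x + b with plain gradient descent on mean squared error."""
    rng = random.Random(seed)
    # Step 4: initialize the weight and bias with random values.
    w, b = rng.uniform(-1, 1), rng.uniform(-1, 1)
    n = len(xs)
    for _ in range(epochs):
        # Step 3: pass the inputs through the model to get outputs.
        preds = [w * x + b for x in xs]
        # Step 1: error between predicted and actual values.
        errors = [p - y for p, y in zip(preds, ys)]
        # Step 5: gradient of the mean squared error for each parameter.
        grad_w = 2 * sum(e * x for e, x in zip(errors, xs)) / n
        grad_b = 2 * sum(errors) / n
        # Step 2: update the weight and bias with the gradient descent rule.
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b


xs = [0.0, 1.0, 2.0, 3.0]
ys = [1.0, 3.0, 5.0, 7.0]  # generated from y = 2x + 1
w, b = train(xs, ys)
print("learned w:", w)
print("learned b:", b)
```

Steps 3, 1, 5, and 2 repeat inside the loop, while step 4 happens once before training starts.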

Q10. You run gradient descent for 15 iterations with learning rate η = 0.3 and compute the error after each iteration. You find that the value of the error decreases very slowly. Based on this, which of the following conclusions seems most plausible?

a) Rather than using the current value of η, use a larger value of η

b) Rather than using the current value of η, use a smaller value of η

c) Keep η = 0.3

d) None of the above

Answer: a)

Disclaimer: Quizermaina doesn’t claim these answers to be 100% correct. Use these answers just for your reference. Always submit your assignment as per the best of your knowledge.